Stopping Safely At A Railroad Crossing Still Too Much For Tesla's So-Called 'Full Self-Driving' Software

When he's not busy posting about the perils of race-mixing, Tesla CEO Elon Musk loves to tell people he's definitely for sure 100% about to really almost solve autonomy and unleash an army of robotaxis that will change transportation forever. The only problem is, the robotaxis keep crashing, and Tesla's so-called "Full Self-Driving" software still doesn't appear to be up to the task. In fact, Electrek reports yet another Tesla driver blamed the software after driving their car through the barriers guarding an active railroad crossing. Oops.

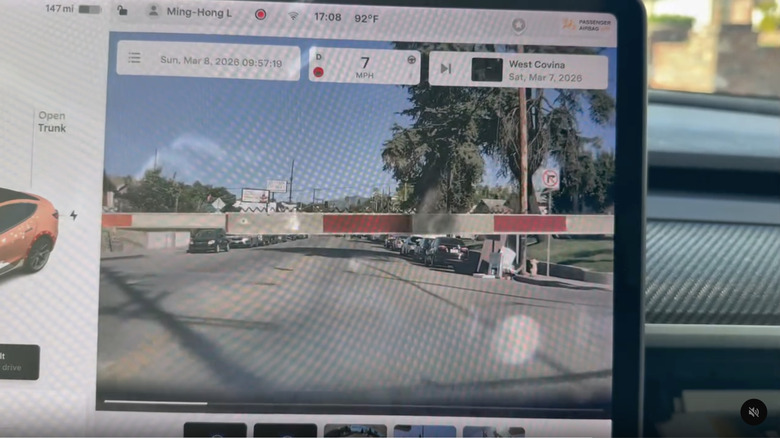

Thankfully, the driver was able to get off the tracks before the oncoming train ruined some unsuspecting paramedic's day, but as you can see in the video embedded below, the car turned onto the road with the railroad crossing, drove toward it as the arms came down, and then plowed right through them at 23 mph with zero hesitation. You know, the arms that don't drop down unless a train is coming. Change the timing just a little bit, and the morgue could have easily ended up with another body in its possession.

Is Tesla's so-called "Full Self-Driving" system just a level 2 advanced driver-assistance system that still requires the driver to pay attention at all times, making this crash the driver's fault? Absolutely. But you wouldn't know it based on the way Tesla's marketing and Musk himself talk about it like the software is actually capable of full self-driving. In fact, just a couple weeks ago, Tesla was forced to drop the "Autopilot" name from its more basic driver-assistance software to avoid more trouble after the state of California determined it was misleading.

Not the first time

If this had been the first time a negligent driver had let their Tesla's driver-assistance software drive them onto a set of railroad tracks, it would still be bad, but this is far from the first time that's happened. Last year, something similar happened in Pennsylvania, which led to the car being clipped by a train headed in the opposite direction. And in January of last year, Rabbit CEO Jesse Lyu's Tesla did the same thing in Santa Monica, forcing him to run a red light in order to avoid being hit. The same thing happened to a different California driver in 2024, too.

In fact, there have been so many incidents of Tesla drivers ending up on train tracks while using their so-called "Full Self-Driving" software that, last September, Senators Ed Markey and Richard Blumenthal sent a letter to the National Highway Traffic Safety Administration urging the agency to open an investigation into the issue. If the election had gone differently, perhaps we would have seen more movement on that investigation, but then Elon got to Washington and began gutting the regulators, and now our lawmakers seem to think they have much bigger problems to deal with.

It isn't just railroad crossings that Tesla's so-called "Full Self-Driving" software has trouble detecting, either. It also loves to blow by school buses, as longtime Tesla critic Dan O'Dowd's Dawn Project has demonstrated many times. Not only did the Tesla tested fail to stop for a stopped school bus with its sign out and lights flashing, it also hit a child-size dummy that the system had detected but decided to run over anyway. Like the railroad crossings, this isn't a new problem, either. Tesla just hasn't bothered to fix it in the years since it learned school buses were an issue for its system.

After ignoring all those issues for so long, it's almost hard to believe Tesla's Austin robotaxis reportedly crash at a rate four times higher than human drivers. Self-driving cars, here we come!